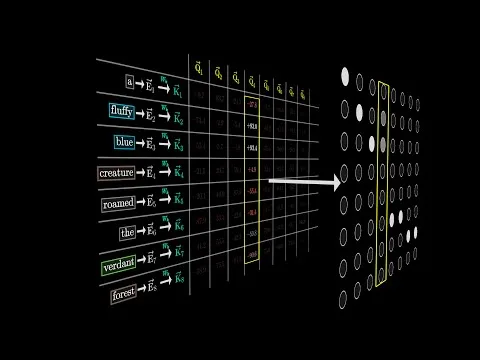

Attention in transformers, visually explained

Demystifying attention, the key mechanism inside transformers and LLMs. In KChat, we apply the attention mechanism to our structured data, adding context and relevance to produce better results. [00:02] Transformers use attention mechanis...

